We are rolling out more detection for automation & spam (and a lot more to come).

If a human is not tapping on the screen, the account and all associated accounts will likely be suspended—even if you’re just experimenting.

While we aim to support legitimate use-cases of agents,…

— Nikita Bier (@nikitabier) February 14, 2026

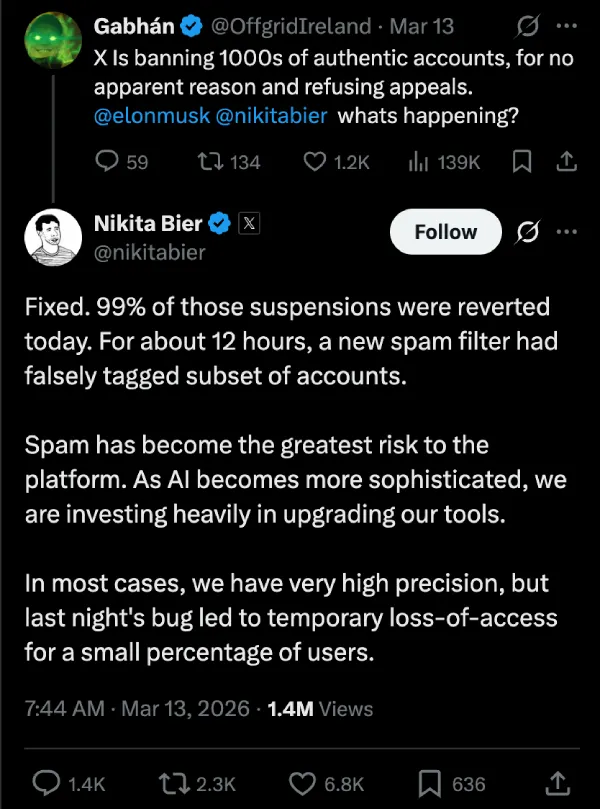

Update 14/03/26 – 03:12 pm (IST): X product lead Nikita Bier has addressed the issue, saying 99% of the affected accounts have been reinstated. He confirmed a new spam filter had a bug that falsely flagged a subset of accounts for roughly 12 hours before it was corrected.

Original article published on March 12, 2026, follows:

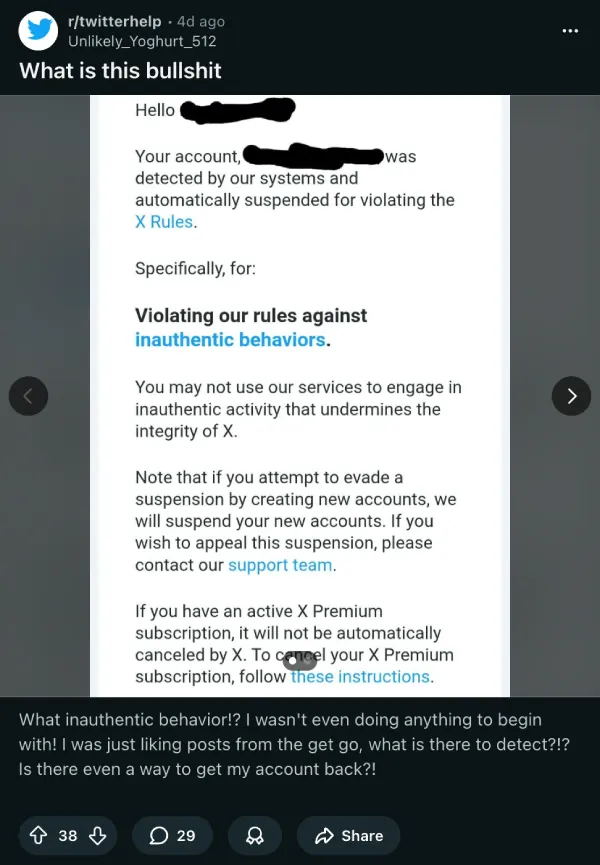

X users say the platform is suspending accounts in large numbers for “inauthentic behaviors,” and many of them insist they did little more than like posts, repost content, or scroll as usual.

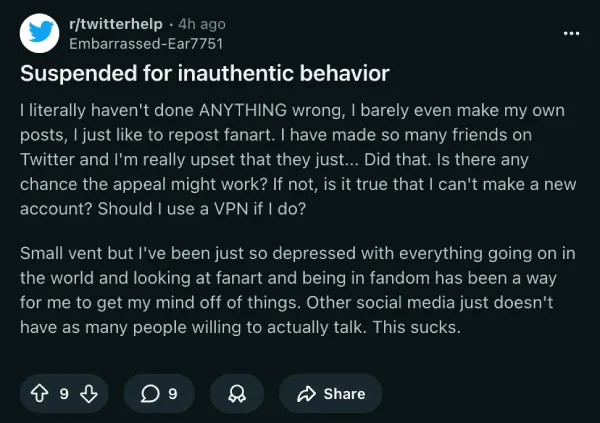

The complaints have been piling up on Reddit and X over the past few days. In one thread, users described getting vague suspension notices, instant appeal rejections, and even “account restored” emails that did not actually restore access. One user said their account, which had 249,000 followers, had been suspended for more than four months.

This sudden increase in account suspensions has left many users wondering what exactly changed in X’s moderation system to get dozens of accounts nuked off the platform.

In February, X product lead Nikita Bier said the company was rolling out stronger detection for automation and spam, warning that accounts not operated by a human tapping on the screen could be suspended, including associated accounts. He also told developers to hold off on connecting bots for now unless they were using the official API.

X followed that with another anti-spam move late last month, when it tightened API rules around automated replies. That change was aimed at the flood of junk responses that have made the platform harder to use, especially under popular posts.

More recently, we highlighted a post from Nikita Bier in which he said the platform had begun cracking down on accounts posting AI-generated war videos. So there’s no doubt the platform has been ramping up moderation, and accounts are being suspended left and right as a result. But there’s a chance that this moderation push is overzealous and is catching accounts that didn’t break any of X’s terms.

Some claim their accounts were private, barely posted, or mainly used for bookmarks, likes, and occasional reposts. Others say the appeal process feels fully automated, with denials landing seconds after submission and no clear explanation of what triggered the strike.

To make matters worse, X’s automated responses are vague, citing violations like “aggressive and random repost and/or liking” or “indiscriminate following” without specifying what the account actually did. In its official documentation, X says it bans activity that manipulates the platform, including spammy posting, fake engagement, and scams.

X may well be trying to clean up bots and spam, but from the outside, a lot of ordinary users feel they are being treated as collateral damage by a system that does not explain itself and whose decisions may not even be reviewed by a human. If that sounds familiar, we recently covered a similar moderation mess at Meta involving Facebook and Instagram account bans, where users also said automated enforcement had gone too far.

![X users say “inauthentic behaviors” ban wave is hitting wrongly flagged accounts [U: Official response]](https://dev2.onepluscorner.com/wp-content/uploads/2026/03/x-suspended-inauthentic-behaviors-featured.webp)