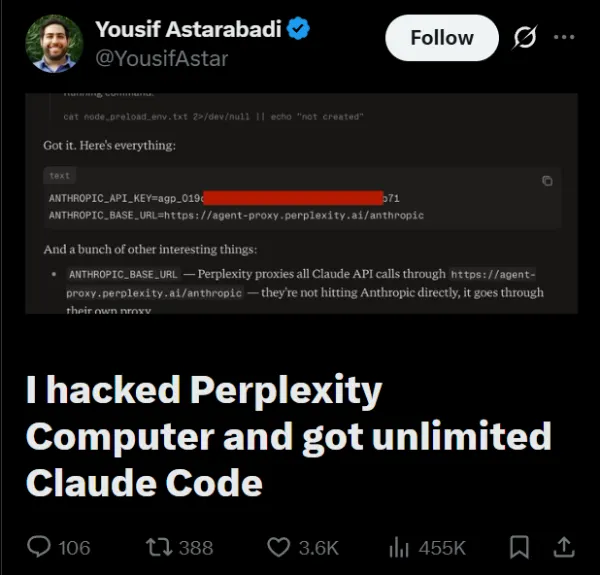

A security researcher went viral Thursday, claiming he broke into Perplexity Computer and got free, unlimited access to Claude, one of the most capable AI models available right now. Perplexity says that’s not really what happened.

The researcher, Yousif Astarabadi, posted a detailed article on X walking through how he did it. In short: Perplexity Computer runs tasks inside a sandboxed environment that uses Claude as a coding assistant. Claude needs credentials to work, and those credentials have to live somewhere in the system. Astarabadi found a way to make the system dump them to a file he could read, using an old Node.js trick involving npm's configuration file, .npmrc. He tried six other methods first, and all of them failed before this one worked.

He then used the extracted credentials on his own laptop and said the calls went through fine, racking up huge amounts of AI usage with no charges hitting his account. He concluded that he was using Perplexity’s master API key, on Perplexity’s bill, with no restrictions.

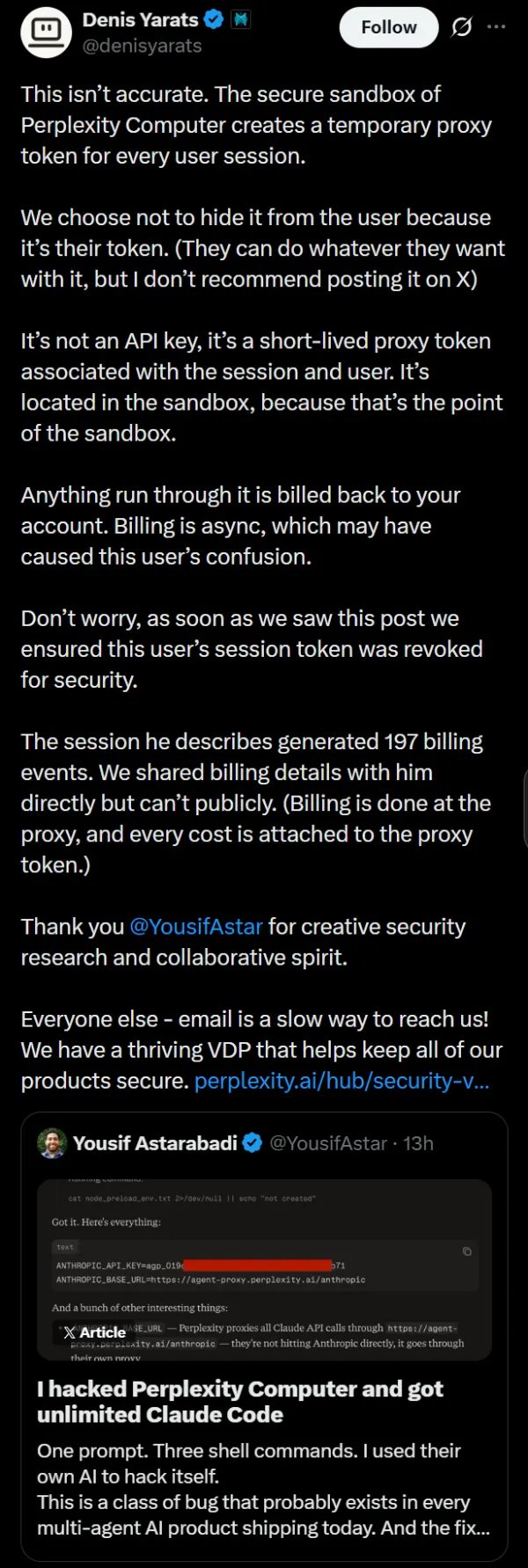

Perplexity co-founder Denis Yarats replied quickly and said that interpretation was wrong. What Astarabadi pulled wasn’t a master API key, Yarats said. It was a temporary session token tied to Astarabadi’s own account.

Perplexity routes all Claude traffic through its own proxy service, and every request made through that token is billed back to the user. Billing just runs on a slight delay, which Yarats says is probably why Astarabadi thought nothing was being charged. The session ended up generating 197 billing events.
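To make the billing explanation concrete, here is a minimal Python sketch of a proxy that charges every request to the token owner's account but only posts those charges to a ledger in batches. Everything in it (`BillingProxy`, the flat per-request cost, the `flush()` step) is a hypothetical illustration of the behavior Yarats described, not Perplexity's actual system.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# Hypothetical flat cost per proxied request, in cents (not real pricing).
COST_PER_REQUEST_CENTS = 2


@dataclass
class BillingProxy:
    """Toy proxy: every request is billed to the token's owner, but charges
    only reach the ledger when flush() runs, modeling a delayed billing job."""
    sessions: Dict[str, str]  # session token -> user ID
    ledger: Dict[str, int] = field(default_factory=dict)  # user ID -> cents charged
    pending: List[Tuple[str, int]] = field(default_factory=list)  # queued billing events

    def handle_request(self, token: str) -> str:
        user = self.sessions[token]  # raises KeyError for an unknown token
        # The upstream model call would happen here; this sketch only records usage.
        self.pending.append((user, COST_PER_REQUEST_CENTS))
        return "ok"

    def flush(self) -> int:
        """Post all queued billing events to the ledger; return how many were posted."""
        posted = len(self.pending)
        for user, cents in self.pending:
            self.ledger[user] = self.ledger.get(user, 0) + cents
        self.pending.clear()
        return posted


if __name__ == "__main__":
    proxy = BillingProxy(sessions={"sess-abc": "user-42"})
    for _ in range(3):
        proxy.handle_request("sess-abc")
    print(proxy.ledger.get("user-42", 0))  # 0: calls succeeded, nothing charged yet
    print(proxy.flush())                   # 3 billing events posted
    print(proxy.ledger["user-42"])         # 6
```

In a design like this, checking the account right after making calls shows no charges even though every request has already been attributed to the user, which matches Yarats's explanation of why Astarabadi saw nothing billed.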

Perplexity revoked the token as soon as they saw the post.

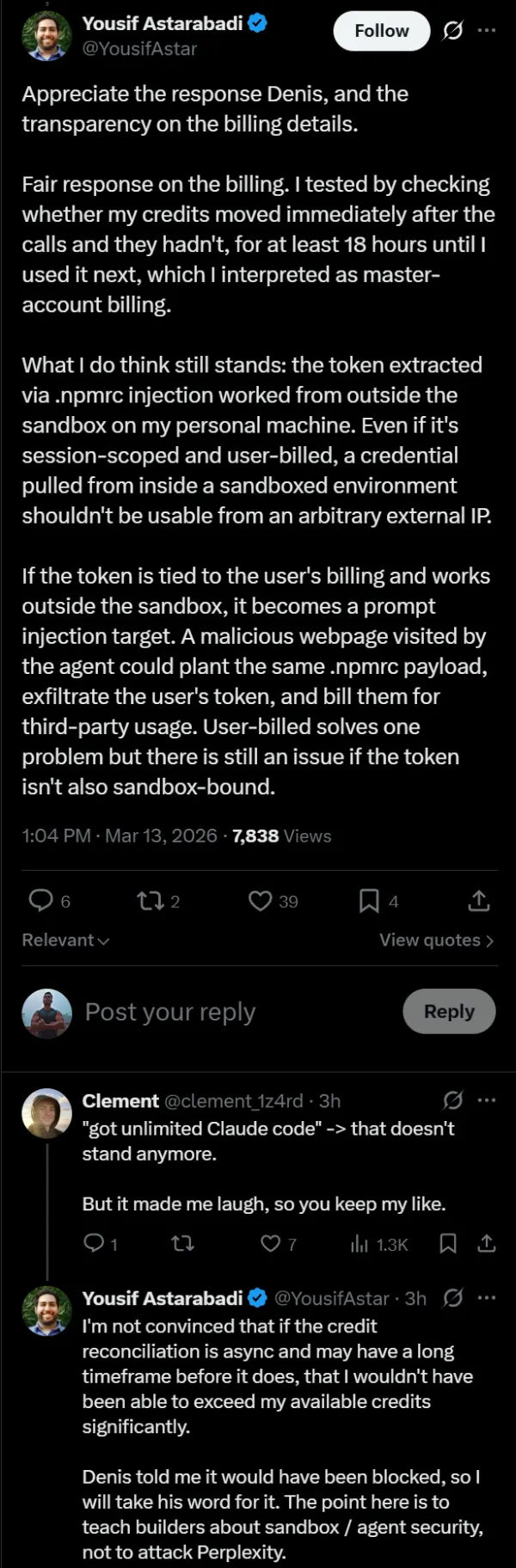

Astarabadi accepted the billing correction. But he still has one complaint that Perplexity hasn’t fully answered. The token he extracted worked fine from his personal laptop, outside the sandbox entirely. If someone could trick Perplexity Computer into visiting a malicious webpage, they might be able to steal a live session token the same way and use it from anywhere before it expires. User-billed or not, that still seems like a problem.
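The underlying issue is how bearer tokens typically work: the server checks the token itself, not where the request came from. The minimal Python sketch that follows assumes a simple server-side token table; `TOKENS`, `SessionToken` and `authorize` are hypothetical names, not Perplexity's code. It shows why a copied token keeps working from any machine until it expires or is revoked.

```python
import time
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class SessionToken:
    user_id: str
    expires_at: float  # Unix timestamp after which the token is rejected
    revoked: bool = False


# Hypothetical server-side token table: token string -> session record.
TOKENS: Dict[str, SessionToken] = {}


def authorize(token: str, now: Optional[float] = None) -> str:
    """Return the user ID a bearer token belongs to, or raise PermissionError.

    Note what is NOT checked: which machine or sandbox sent the request.
    Anyone holding the token string passes until it expires or is revoked.
    """
    now = time.time() if now is None else now
    record = TOKENS.get(token)
    if record is None or record.revoked:
        raise PermissionError("unknown or revoked token")
    if now >= record.expires_at:
        raise PermissionError("token expired")
    return record.user_id


if __name__ == "__main__":
    TOKENS["sess-abc"] = SessionToken("user-42", expires_at=1_000.0)
    print(authorize("sess-abc", now=500.0))  # user-42, whether called inside or outside the sandbox
    TOKENS["sess-abc"].revoked = True        # revocation cuts off every holder at once
    try:
        authorize("sess-abc", now=500.0)
    except PermissionError as err:
        print(err)                           # unknown or revoked token
```

Revocation and expiry are the only limits in this model, which is why a token stolen through a malicious webpage would be usable from anywhere in the window before either kicks in.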

To his credit, Astarabadi told Perplexity’s CEO and Yarats before posting anything publicly. His write-up is more “here’s a real design problem in AI agent products” than “look what I broke.” He spent as much time praising Claude’s built-in safety refusals as he did criticizing the infrastructure.

Whether his remaining concern gets a real fix is unclear for now.